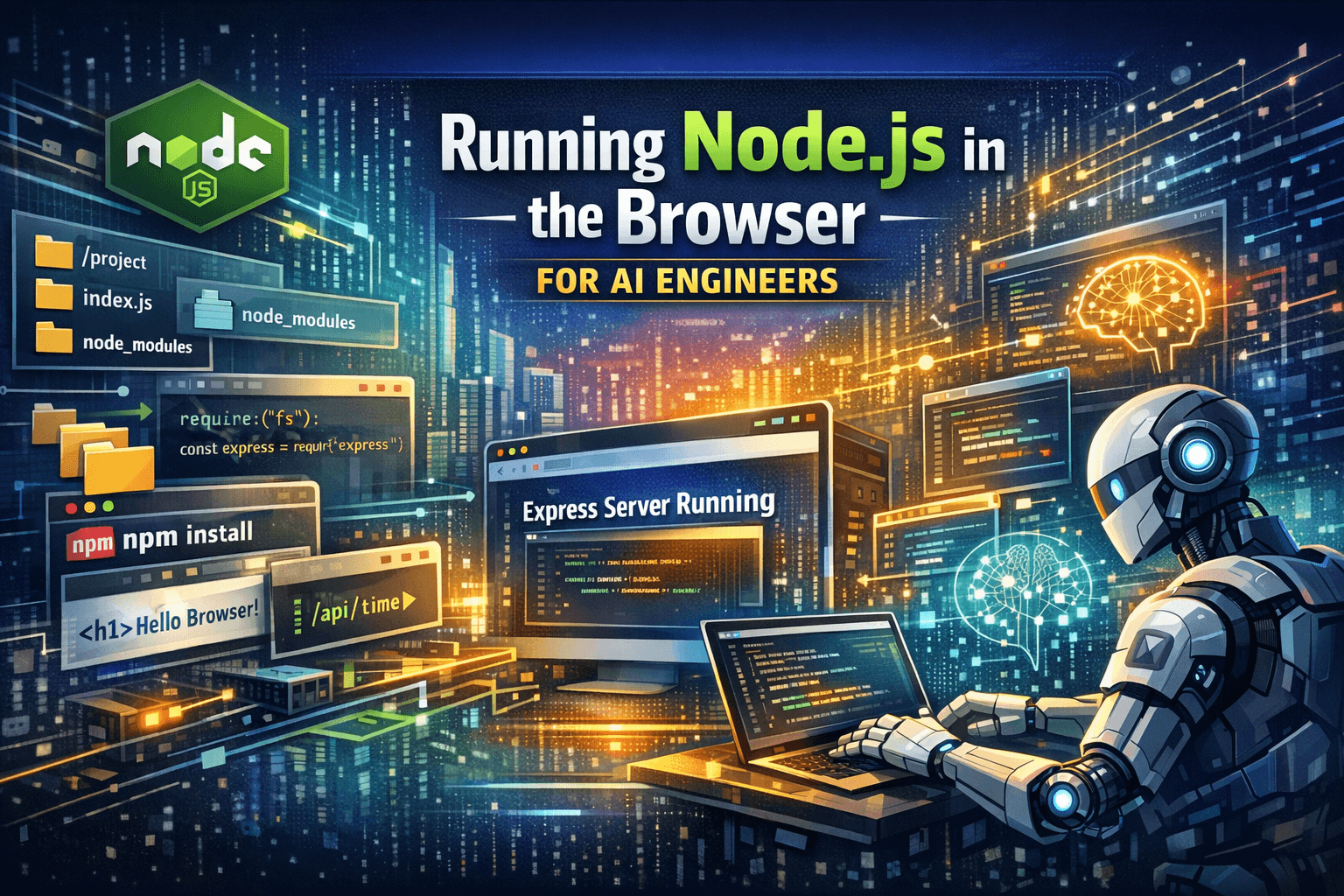

Running Node.js in the Browser — Why AI Engineers Should Pay Attention to Almostnode

We've always treated the runtime boundary as fixed:

Browser → UI

Node.js → Backend

AI → Cloud inference + remote execution

Almostnode challenges that assumption. It provides a Node.js-compatible runtime inside the browser, including a virtual filesystem, core module shims, npm installs, and support for frameworks like Express, all without a backend server.

This is not a gimmick. For engineers building AI-powered developer tools, this changes architectural tradeoffs.

What "Node.js in the Browser" Actually Means

Almostnode provides:

In-memory virtual filesystem

Shimmed Node core modules (fs, path, http, crypto, etc.)

Client-side npm installation

Dev server behavior in-browser

Support for frameworks like Express

There is no container, no VM, no server process. Everything executes inside the browser runtime.

Why This Matters for AI Systems

AI coding tools increasingly need to:

Generate code

Execute it

Inspect output

Modify it

Repeat

Today, this usually requires:

Remote sandboxes

Container orchestration

Security isolation

Scaling infrastructure

With Almostnode, execution can happen locally in the browser, removing backend execution from the equation. For AI-native tooling, that's a meaningful shift.

Practical AI Use Cases

1. Zero-Infrastructure Code Execution

An AI assistant generates:

    const express = require("express");

    const app = express();

    app.get("/", (req, res) => {
      res.send("Running inside the browser");
    });

    app.listen(3000);

In a traditional setup:

Code is sent to a remote executor

Container spins up

Server boots

Output is proxied back

With Almostnode:

AI writes files into the virtual filesystem

Dependencies install client-side

Server runs in-browser

No backend required.

2. AI-Powered Interactive Documentation

Static examples are limiting. With a browser-native Node runtime:

User: "Add request validation."

AI:

Modifies files

Installs a package

Restarts the server

Shows updated behavior instantly

Documentation becomes executable and AI-adaptable. This is particularly relevant for:

DevRel teams

SDK documentation

AI-assisted onboarding experiences

3. Safe AI Code Sandboxing

Running arbitrary AI-generated code on servers introduces:

Security concerns

Resource management complexity

Infrastructure cost

Running it inside the browser:

Constrains execution scope

Leverages browser sandboxing

Removes server-side risk surface

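For playground-style AI tools, this is significantly simpler. One caveat: generated code still runs with whatever privileges the embedding page grants it, so untrusted output is best kept away from the application's own origin, for example in a sandboxed iframe.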

4. Multi-File AI App Generation

Because Almostnode supports real file structures, AI can generate full project layouts:

/index.js

/routes/users.js

/middleware/auth.js

/package.json

It can:

Write multiple files

Install dependencies

Boot the app

Iterate based on output

That enables AI-driven:

Coding assessments

Backend learning environments

Interactive tutorials

Demo systems

Without provisioning infrastructure.
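As a rough sketch of the "write multiple files" step, the snippet below validates a model's output before it is written into a virtual filesystem. The response shape (a flat map of path to contents), the `stageProject` helper, and the sample files are all assumptions made for illustration. None of this is Almostnode's API.

```javascript
// Hypothetical model response: a flat map of absolute path -> file contents.
const modelOutput = {
  "/index.js": "const users = require('./routes/users');",
  "/routes/users.js": "module.exports = [];",
  "/package.json": JSON.stringify({
    name: "demo-app",
    dependencies: { express: "*" },
  }),
};

// Validate generated files before they reach the virtual filesystem.
// Returns the staged files plus the dependency names to install next.
function stageProject(files) {
  const staged = new Map();
  for (const [path, contents] of Object.entries(files)) {
    // Only accept absolute paths with no parent-directory segments.
    if (!path.startsWith("/") || path.split("/").includes("..")) {
      throw new Error(`Rejected path: ${path}`);
    }
    if (typeof contents !== "string") {
      throw new Error(`Non-text file: ${path}`);
    }
    staged.set(path, contents);
  }
  if (!staged.has("/package.json")) {
    throw new Error("Missing /package.json");
  }
  // JSON.parse throws if the generated manifest is malformed.
  const manifest = JSON.parse(staged.get("/package.json"));
  return {
    files: staged,
    dependencies: Object.keys(manifest.dependencies ?? {}),
  };
}

const project = stageProject(modelOutput);
```

Checking the output up front keeps a malformed path or a broken package.json from surfacing later as a confusing runtime error.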

5. Iterative AI Agents in the Browser

An autonomous coding loop typically looks like:

Generate code

Execute

Observe output

Modify

Repeat

Almostnode allows this loop to run entirely client-side. For AI agent experimentation, that means there is no remote sandbox to provision before the first iteration.
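The loop above can be sketched in a few lines. This is a minimal illustration under stated assumptions: `generate` stands in for a model call, `execute` stands in for whatever runtime runs the code, and `scriptedModel` and `executeInProcess` are toy placeholders. It does not use Almostnode's API.

```javascript
// Hypothetical agent loop: `generate` stands in for a model call and
// `execute` for the runtime that actually runs the generated code.
async function runAgentLoop({ generate, execute, maxIterations = 5 }) {
  let feedback = null;
  for (let attempt = 1; attempt <= maxIterations; attempt++) {
    const code = await generate(feedback); // generate (or modify)
    const result = await execute(code);    // execute
    if (result.ok) {                       // observe
      return { attempts: attempt, code, output: result.output };
    }
    feedback = result.error;               // feed the error into the next attempt
  }
  throw new Error(`No working code after ${maxIterations} attempts`);
}

// Toy executor: runs a function body in-process via `new Function`.
function executeInProcess(code) {
  try {
    return { ok: true, output: new Function(code)() };
  } catch (err) {
    return { ok: false, error: String(err) };
  }
}

// Toy "model": the first attempt has a bug, the retry fixes it.
function scriptedModel(feedback) {
  return feedback === null
    ? "return undefinedVariable + 1;"
    : "return 41 + 1;";
}

runAgentLoop({ generate: scriptedModel, execute: executeInProcess })
  .then((result) => console.log(result.attempts, result.output)); // logs: 2 42
```

Passing `generate` and `execute` in as parameters keeps the loop independent of where the code runs, so the same control flow works against a remote sandbox or a browser runtime.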

Essential Technical Concepts

Virtual Filesystem

An in-memory filesystem enables:

Real file hierarchies

Module resolution

File watching

Multi-file projects

This is critical for realistic AI code generation.
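To make the link between an in-memory filesystem and module resolution concrete, here is a deliberately small sketch. `MemoryFS`, `resolvePath`, and `createRequire` are illustrative names, not Almostnode's implementation. The resolver handles only relative paths and an optional `.js` extension, and it skips `node_modules` lookup entirely.

```javascript
// Minimal in-memory filesystem: absolute path -> file contents.
class MemoryFS {
  constructor() {
    this.files = new Map();
  }
  writeFileSync(path, contents) {
    this.files.set(path, contents);
  }
  readFileSync(path) {
    if (!this.files.has(path)) throw new Error(`ENOENT: ${path}`);
    return this.files.get(path);
  }
  existsSync(path) {
    return this.files.has(path);
  }
}

// Resolve "./x" or "../x" against the requiring file's directory.
function resolvePath(fromDir, request) {
  const parts = fromDir.split("/").filter(Boolean);
  for (const segment of request.split("/")) {
    if (segment === "." || segment === "") continue;
    if (segment === "..") parts.pop();
    else parts.push(segment);
  }
  return "/" + parts.join("/");
}

// Build a CommonJS-style require() that loads modules from the MemoryFS.
function createRequire(vfs, fromDir = "/", cache = new Map()) {
  return function require(request) {
    let filename = resolvePath(fromDir, request);
    if (!vfs.existsSync(filename)) filename += ".js";
    if (cache.has(filename)) return cache.get(filename).exports;

    const module = { exports: {} };
    cache.set(filename, module); // cache before running so cycles terminate
    const dirname = filename.slice(0, filename.lastIndexOf("/")) || "/";
    const wrapper = new Function(
      "module", "exports", "require",
      vfs.readFileSync(filename)
    );
    wrapper(module, module.exports, createRequire(vfs, dirname, cache));
    return module.exports;
  };
}

const vfs = new MemoryFS();
vfs.writeFileSync("/routes/users.js", "module.exports = () => ['ada', 'linus'];");
vfs.writeFileSync("/index.js", "module.exports = require('./routes/users')().length;");

const userCount = createRequire(vfs)("./index"); // evaluates to 2
```

Each module gets a `require` bound to its own directory, which is what lets `./routes/users` resolve correctly from `/index.js`.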

Core Module Shims

Node core modules such as fs, http, and crypto are reimplemented on top of browser-compatible mechanisms, so server-style code can run without OS-level access.
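The general idea of a shim can be shown with a toy version: objects that expose Node's method names but are backed by plain JavaScript. `fsShim`, `pathShim`, and `shimRequire` below are hypothetical and cover only a handful of methods. A real shim layer has to match far more of Node's behavior.

```javascript
// Backing store shared by the shims: path -> contents.
const store = new Map();

// Hypothetical `fs` shim: Node's method names, backed by memory.
// Simplification: contents are always stored and returned as strings.
const fsShim = {
  writeFileSync(file, data) {
    store.set(file, String(data));
  },
  readFileSync(file) {
    if (!store.has(file)) {
      throw new Error(`ENOENT: no such file or directory, open '${file}'`);
    }
    return store.get(file);
  },
  existsSync(file) {
    return store.has(file);
  },
};

// Hypothetical `path` shim covering only join() and basename().
const pathShim = {
  join(...segments) {
    return segments.join("/").replace(/\/+/g, "/");
  },
  basename(file) {
    return file.slice(file.lastIndexOf("/") + 1);
  },
};

const builtins = new Map([
  ["fs", fsShim],
  ["path", pathShim],
]);

// Stand-in for require(): serves shims for built-in module names.
function shimRequire(name) {
  const key = name.replace(/^node:/, "");
  if (!builtins.has(key)) throw new Error(`Cannot find module '${name}'`);
  return builtins.get(key);
}

// "Server-style" code written against the Node API, run against the shims.
function saveConfig(require) {
  const fs = require("fs");
  const path = require("path");
  const file = path.join("/app", "config", "settings.json");
  fs.writeFileSync(file, JSON.stringify({ port: 3000 }));
  return JSON.parse(fs.readFileSync(file, "utf8")).port;
}

const port = saveConfig(shimRequire); // evaluates to 3000
```

`saveConfig` never learns that it is not talking to the operating system, which is why the same approach breaks down for features such as native modules or raw sockets.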

Client-Side npm Installation

AI can dynamically install dependencies:

User: "Add zod for validation."

AI:

Installs package

Refactors code

Restarts app

No backend pipeline required.
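As a sketch of what a client-side install has to work out, the snippet below reads npm registry metadata (the per-package JSON document with `dist-tags` and `versions`) and plans which tarballs to download. It is not Almostnode's installer. The `resolveFromPackument` and `planInstall` helpers are hypothetical, the registry data is made up, and semver ranges are ignored in favor of always taking the `latest` tag.

```javascript
// Pick a version from a registry metadata document and return what an
// installer needs next: the tarball URL and the dependency names.
function resolveFromPackument(packument, tag = "latest") {
  const version = packument["dist-tags"][tag];
  const manifest = packument.versions[version];
  if (!manifest) throw new Error(`No version for tag "${tag}"`);
  return {
    name: packument.name,
    version,
    tarball: manifest.dist.tarball,
    dependencies: Object.keys(manifest.dependencies ?? {}),
  };
}

// Walk the dependency graph breadth-first. `fetchPackument` is injected so
// a browser could pass a fetch()-based implementation instead.
async function planInstall(rootName, fetchPackument) {
  const plan = new Map();
  const queue = [rootName];
  while (queue.length > 0) {
    const name = queue.shift();
    if (plan.has(name)) continue; // already resolved
    const resolved = resolveFromPackument(await fetchPackument(name));
    plan.set(name, resolved);
    queue.push(...resolved.dependencies);
  }
  return [...plan.values()];
}

// Made-up registry data, for illustration only.
const fakeRegistry = {
  "example-validator": {
    name: "example-validator",
    "dist-tags": { latest: "1.2.0" },
    versions: {
      "1.2.0": {
        dist: { tarball: "https://registry.example.invalid/example-validator-1.2.0.tgz" },
        dependencies: { "example-utils": "^0.3.0" },
      },
    },
  },
  "example-utils": {
    name: "example-utils",
    "dist-tags": { latest: "0.3.1" },
    versions: {
      "0.3.1": {
        dist: { tarball: "https://registry.example.invalid/example-utils-0.3.1.tgz" },
      },
    },
  },
};

const fetchFromFake = async (name) => fakeRegistry[name];

planInstall("example-validator", fetchFromFake)
  .then((plan) => console.log(plan.map((p) => `${p.name}@${p.version}`)));
```

A real installer would still have to download and unpack each tarball into the virtual filesystem and honor version ranges. The plan step is shown here because it is the part that replaces a backend pipeline.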

What This Is Not

Engineers should be clear about constraints:

Not full Node.js parity

No native modules

No raw TCP sockets

Not production backend infrastructure

This is best suited for:

Tooling

Education

AI playgrounds

Prototyping

Interactive developer experiences

Architectural Comparison

Traditional AI execution model: User → AI → Remote Container → Result

Browser-native model with Almostnode: User → AI → Browser Runtime → Result

The second removes:

Container orchestration

Container cold starts

Execution infrastructure

Ongoing compute cost

For teams building AI developer products, that simplification is non-trivial.

Who Should Explore This

Engineers building AI coding copilots

Teams designing AI-driven documentation systems

Platforms creating coding sandboxes

AI agent researchers experimenting with local execution loops

If your system needs to generate and run Node.js code — but you want to avoid server-side execution complexity — this is worth exploring.

Closing Perspective

Almostnode does not replace Node.js servers. It redefines where Node.js-compatible execution can happen.

For AI engineers, the implication is straightforward: You can move the execution boundary from the server to the browser — and simplify your system architecture in the process.

That's not just convenient. It changes how AI-native developer tools can be built.