From SDLC to ADLC: How AI Agents Are Rewiring Software Development

Key differences and important considerations

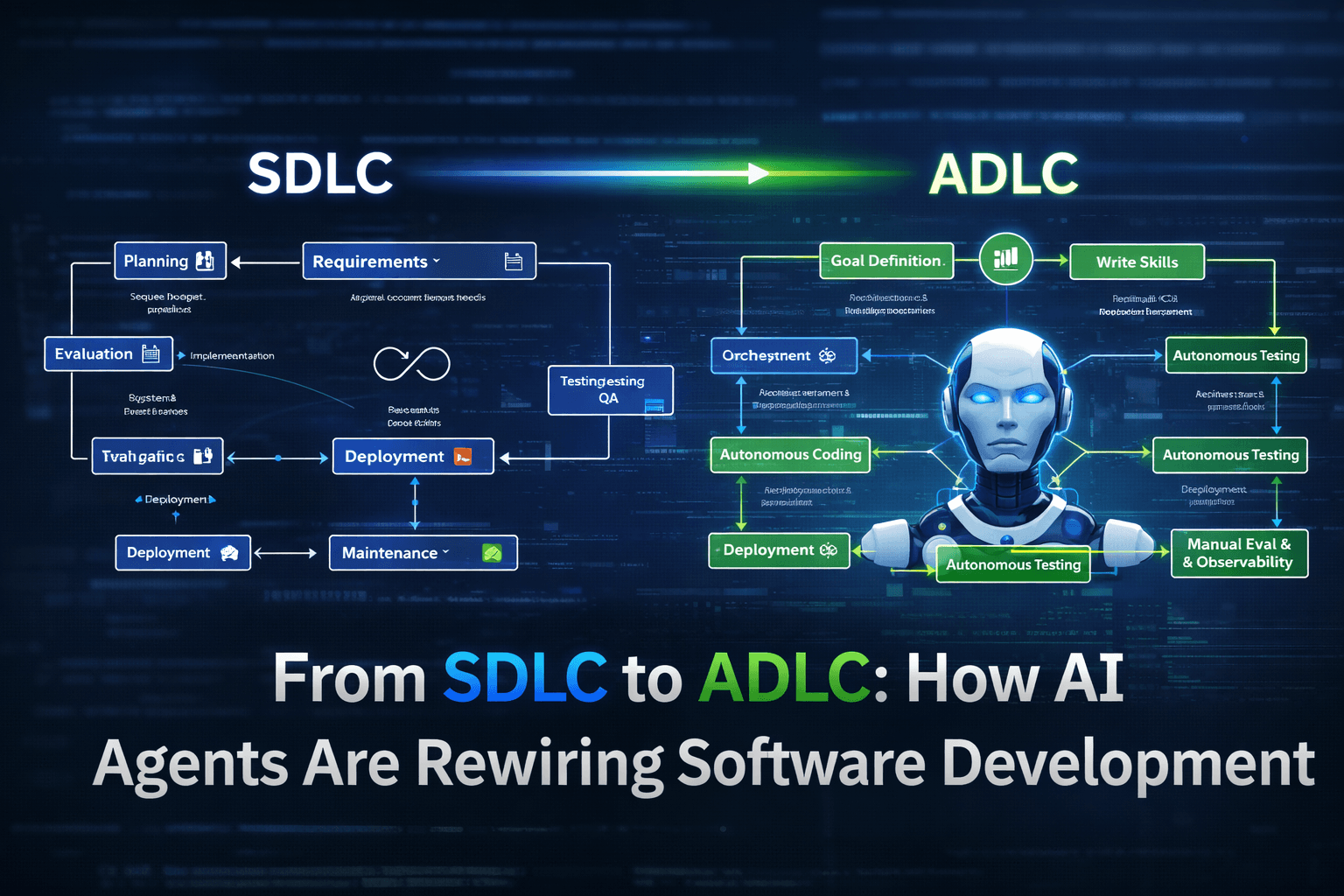

The software development landscape is undergoing a fundamental shift. For decades, the Software Development Life Cycle (SDLC) has provided the structural backbone for building software—eight well-defined phases that guide teams from initial planning through final maintenance. Yet as codebase complexity grows and delivery expectations accelerate, the limitations of this traditional model become increasingly apparent. A new paradigm is emerging: the Agent-Driven Development Life Cycle (ADLC), where AI agents become active participants in the development process rather than mere tools. This article examines both paradigms, their differences, and what the shift means for development teams.

Traditional SDLC: The Eight-Phase Model

The traditional SDLC provides a sequential, documentation-heavy approach to software development. Understanding its eight phases is essential to appreciating both its strengths and its constraints.

The Eight Phases

Planning — Project managers and stakeholders define the overall scope, objectives, and feasibility. Resource allocation, timelines, and budget estimates are established. This phase answers the question: "Should we build this?"

Requirements — Analysts work with stakeholders to gather and document detailed functional and non-functional requirements. The output is typically a Software Requirements Specification (SRS) that serves as the contractual basis for development.

Design — Architects and senior developers create the system design, including high-level architecture, database schemas, API designs, and UI mockups. This phase produces the Technical Design Specification that guides implementation.

Implementation — Developers write actual code based on the design documents. This is often the longest phase, where individual components are built according to specified requirements.

Testing — QA teams run several levels of testing: unit, integration, system, and acceptance testing. Bugs are identified, logged, and fixed in cycles that can extend timelines significantly.

Deployment — The validated software is released to production environments. This involves configuration management and environment setup, and often relies on DevOps practices such as continuous deployment.

Maintenance — Post-deployment, the team addresses bug fixes, performance issues, security patches, and feature enhancements. Maintenance often consumes the largest portion of a software system's lifecycle cost.

Evaluation — Teams conduct post-mortems to assess what worked, what didn't, and how future projects can improve. Lessons learned feed back into organizational processes.

This structured approach brings discipline and predictability. Large enterprises, particularly in regulated industries like banking and healthcare, still rely on SDLC for its audit trails, clear hand-offs, and risk mitigation.

SDLC Limitations

Despite its widespread adoption, traditional SDLC struggles in modern development contexts. Several core limitations constrain velocity and adaptability.

Slow Development Cycles

SDLC's sequential nature creates inherent delays. Each phase must be substantially complete before the next begins. Requirements must be "frozen" before design begins; design must be finalized before implementation starts. In fast-moving markets, months-long cycles mean delivered software addresses yesterday's problems rather than today's.

Sequential Phase Dependencies

The phase-gate model creates bottlenecks. A single requirement ambiguity can halt design. A design flaw discovered late in testing requires costly rework across multiple phases. Teams can't parallelize work effectively because downstream phases depend on upstream deliverables.
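To make the rework cascade concrete, here is a minimal, illustrative sketch of a strict phase-gate model. The function name `phases_to_redo` is hypothetical; the idea is simply that every phase between where a flaw was introduced and where it was found consumed flawed output and has to be repeated.

```python
# The eight SDLC phases in the order this article lists them.
SDLC_PHASES = [
    "Planning", "Requirements", "Design", "Implementation",
    "Testing", "Deployment", "Maintenance", "Evaluation",
]


def phases_to_redo(origin: str, discovered_in: str) -> list[str]:
    """Return the phases to revisit when a defect introduced in `origin`
    is only discovered in `discovered_in` (hypothetical helper).

    In a strict phase-gate model, every phase from the origin up to the
    point of discovery built on the flawed output and must be reworked.
    """
    start = SDLC_PHASES.index(origin)
    end = SDLC_PHASES.index(discovered_in)
    if end < start:
        raise ValueError("a defect cannot be discovered before it is introduced")
    return SDLC_PHASES[start : end + 1]


print(phases_to_redo("Design", "Testing"))
# ['Design', 'Implementation', 'Testing']
```

The later a flaw surfaces, the longer the returned list, which is the structural reason late discovery is so costly in a sequential model.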

Late Testing

Testing typically occurs after implementation is complete. Widely cited industry estimates, including a 2002 NIST study on software testing, put the cost of fixing a defect in production at roughly 30 to 100 times the cost of fixing it during design. Yet SDLC structurally pushes testing toward the end of the lifecycle, when changes are most expensive to make.

Communication Overhead

Each phase transition requires formal sign-offs, documentation updates, and stakeholder alignment. As projects scale, coordination costs grow roughly quadratically, because each person added to a project may need to coordinate with everyone already on it. Misaligned documentation between phases is a common source of defects: developers implement what the document says, not necessarily what stakeholders intended.
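The quadratic growth comes from counting pairwise communication paths: n people have n(n-1)/2 possible channels, the observation Fred Brooks popularized in The Mythical Man-Month. A quick sketch (the function name is illustrative):

```python
def communication_channels(team_size: int) -> int:
    """Number of distinct pairwise communication paths among `team_size` people."""
    if team_size < 0:
        raise ValueError("team_size must be non-negative")
    return team_size * (team_size - 1) // 2


for n in (3, 5, 10, 20):
    print(f"{n:>2} people -> {communication_channels(n):>3} channels")
# 3 -> 3, 5 -> 10, 10 -> 45, 20 -> 190
```

Doubling a team from 10 to 20 people more than quadruples the channels (45 to 190), which is why formal hand-offs and sign-offs become heavier as projects grow.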

Inflexibility to Change

Once requirements are frozen and design is complete, introducing changes triggers formal change control processes. This rigidity conflicts with modern expectations of rapid iteration and continuous delivery.

ADLC: The Agent-Driven Development Lifecycle

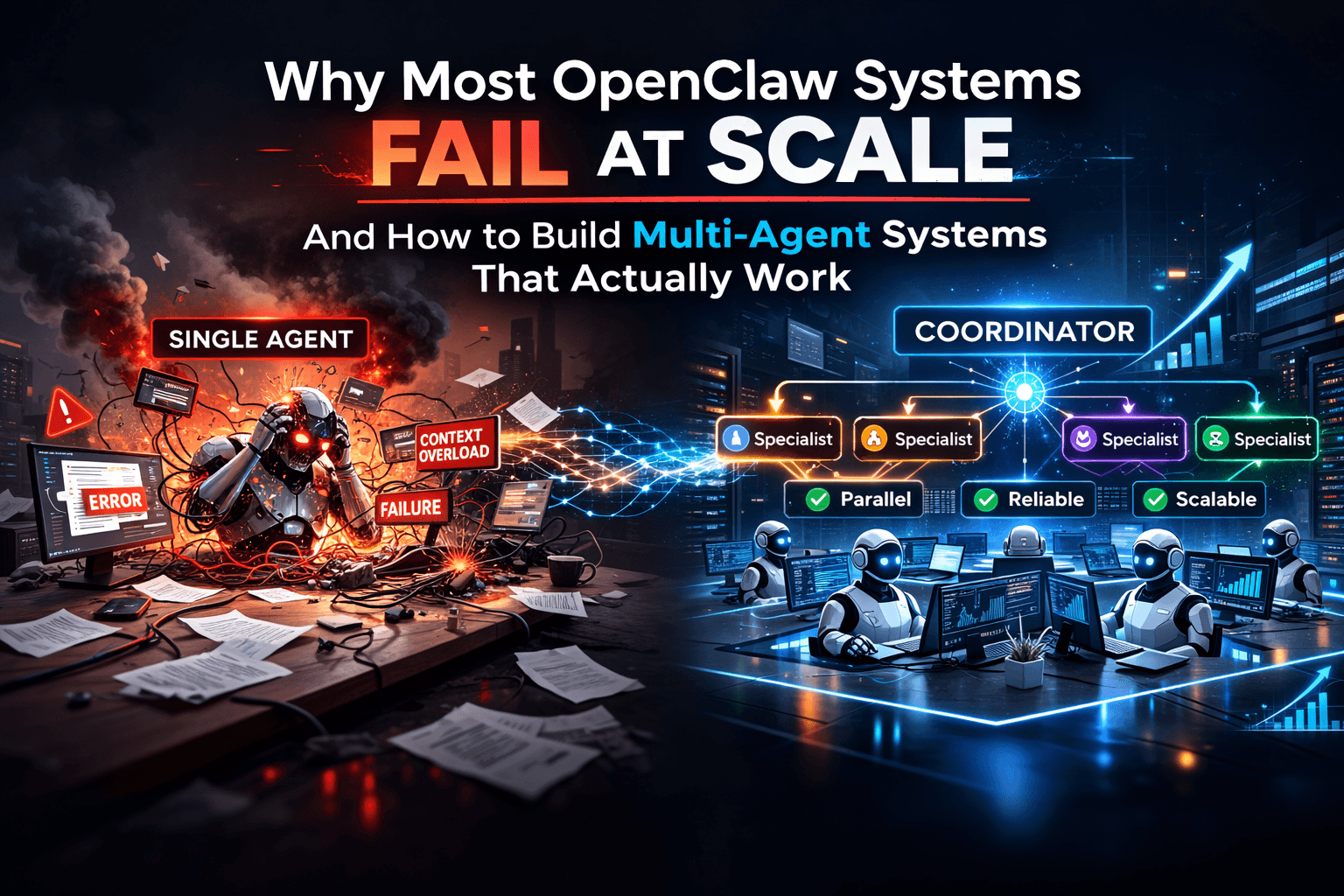

ADLC represents a fundamental reimagining of development where AI agents participate substantively in the workflow rather than serving merely as assistants. In ADLC, agents can autonomously execute development tasks within defined parameters, with humans providing strategic direction and quality evaluation at key points.

The core distinction: instead of humans driving every step, agents handle substantial portions of coding, testing, and deployment work. Humans shift from primary executors to reviewers, approvers, and intent-setters.

This doesn't mean humans become irrelevant—it means humans operate at a different granularity. Rather than writing every line of code, humans define what needs to be built and evaluate whether the agent's output meets requirements.
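One way to picture "within defined parameters" is as an explicit policy: which tools an agent may call, and which actions still need a human sign-off. The sketch below is a minimal, hypothetical illustration; the `AgentPolicy` class, the `authorize` function, and the tool names are invented for this example and are not part of any particular agent framework.

```python
from dataclasses import dataclass, field


@dataclass
class AgentPolicy:
    """Hypothetical guardrails a team might define for one agent."""
    name: str
    allowed_tools: set[str] = field(default_factory=set)
    # Actions that must wait for a human decision before they take effect.
    requires_approval: set[str] = field(default_factory=set)


def authorize(policy: AgentPolicy, tool: str, human_approved: bool = False) -> bool:
    """Return True if the agent may use `tool` right now."""
    if tool not in policy.allowed_tools:
        return False  # outside the agent's defined parameters
    if tool in policy.requires_approval and not human_approved:
        return False  # humans remain the approvers for high-impact actions
    return True


coder = AgentPolicy(
    name="coding-agent",
    allowed_tools={"read_file", "write_file", "run_tests", "merge_to_main"},
    requires_approval={"merge_to_main"},
)

print(authorize(coder, "write_file"))                           # True
print(authorize(coder, "deploy"))                               # False
print(authorize(coder, "merge_to_main"))                        # False
print(authorize(coder, "merge_to_main", human_approved=True))   # True
```

The agent executes freely inside its allowlist, while the approval set marks exactly where the human roles of reviewer and approver come into play.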

ADLC Stages

ADLC typically involves nine stages, though implementations vary:

Goal Definition — Humans specify the high-level objective: "Build a REST API for user authentication." This is less detailed than traditional requirements; agents infer specifics from context.

Build PRD — Agents help generate product requirement documents from goals, filling in technical details and edge cases.

Write Skills — Developers define agent capabilities, tool access, and prompting strategies that shape how agents operate.

Orchestrate Agents — A coordinator manages multiple specialized agents working in parallel—coding agents, testing agents, deployment agents.

Autonomous Coding — Agents write, refactor, and improve code within defined constraints, handling implementation details autonomously.

Autonomous Testing — Agents generate and run tests continuously, catching defects close to their introduction point rather than late in the cycle.

Manual Evaluation — Humans review agent output, making strategic decisions that agents cannot handle.

Deployment — Agents handle release pipelines, configuration, and production deployment.

Monitoring & Feedback — Continuous observation of deployed systems informs future iterations.
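The Autonomous Coding and Autonomous Testing stages are often wired together as a loop: the coding agent proposes an implementation, tests run immediately, and failures feed into the next attempt, with a human evaluating whatever finally passes. In the minimal sketch below, `fake_coding_agent` is a hypothetical hard-coded stand-in (a real system would call a model and pass the feedback into its prompt), and the test suite for an absolute-value function is invented for illustration.

```python
from typing import Callable, Optional

# A candidate implementation is just a function here; a real coding agent
# would produce source code instead.
Candidate = Callable[[int], int]


def run_tests(candidate: Candidate) -> list[str]:
    """Hypothetical test suite for an absolute-value function.
    Returns failure messages; an empty list means every test passed."""
    cases = {5: 5, -3: 3, 0: 0}
    failures = []
    for arg, expected in cases.items():
        got = candidate(arg)
        if got != expected:
            failures.append(f"abs({arg}) returned {got}, expected {expected}")
    return failures


def fake_coding_agent(attempt: int, feedback: list[str]) -> Candidate:
    """Stand-in for a coding agent: the first draft ignores negative inputs,
    the second draft (produced after seeing test feedback) is correct."""
    if attempt == 0:
        return lambda x: x
    return lambda x: -x if x < 0 else x


def generate_and_test(max_attempts: int = 3) -> Optional[Candidate]:
    feedback: list[str] = []
    for attempt in range(max_attempts):
        candidate = fake_coding_agent(attempt, feedback)
        feedback = run_tests(candidate)  # tests run right after each draft
        print(f"attempt {attempt}: {len(feedback)} failing test(s)")
        if not feedback:
            return candidate  # goes to Manual Evaluation, not straight to deploy
    return None  # escalate to a human after repeated failures


result = generate_and_test()
# attempt 0: 1 failing test(s)
# attempt 1: 0 failing test(s)
```

Capping the number of attempts keeps a struggling agent from looping indefinitely and hands the problem back to a human instead.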

SDLC vs ADLC: A Comparison

| Aspect | Traditional SDLC | Agent-Driven ADLC |
|---|---|---|
| Execution Driver | Humans at every phase | Agents handle execution; humans direct |
| Planning Approach | Phase-sequential, detailed specs | Goal-oriented, iterative |
| Development Speed | Weeks to months per cycle | Hours to days for well-bounded tasks |
| Testing Process | Late, dedicated phase | Continuous, integrated with coding |
| Adaptability | Rigid; formal change control | Flexible; goal shifts redirect agents |
| Feedback Loops | Long (weeks) | Short (minutes to hours) |
| Human Role | Primary executor | Reviewer, approver, intent-setter |
| Knowledge Retention | Individual team members | Codified in agent configs and logs |

Benefits of ADLC

Teams adopting ADLC commonly cite several advantages:

Faster Development Cycles — By automating code generation, test creation, and basic bug fixing, ADLC compresses the time from intent to working software. Tasks requiring days of developer effort can complete in hours.

Parallel Agent Workflows — Multiple agents work simultaneously on independent components. One agent writes database code, another implements API endpoints, and a third generates tests. The orchestrator manages dependencies and ensures coherent integration.

Automated Testing at Scale — Agents generate comprehensive test suites that would be time-prohibitive for humans to create manually. This improves code quality and provides regression protection.

Reduced Repetitive Work — Routine code patterns, boilerplate generation, test scaffolding, and deployment automation free humans for higher-value architectural and strategic work.

Continuous Feedback — Immediate feedback between code generation and test execution maintains code quality and reduces rework from late-stage defect discovery.
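The parallel workflow described above can be sketched as a dependency-aware scheduler: every task whose dependencies are finished runs concurrently, and dependent work waits for its inputs. This is a minimal illustration using Python's `asyncio`; the task graph and `run_agent` are hypothetical stand-ins for real specialized agents.

```python
import asyncio

# Hypothetical task graph: each task lists the tasks it depends on.
TASKS: dict[str, set[str]] = {
    "db_models": set(),
    "api_endpoints": set(),
    "test_suite": set(),
    "integration": {"db_models", "api_endpoints", "test_suite"},
    "deploy_config": {"integration"},
}


async def run_agent(task: str) -> str:
    """Stand-in for a specialized agent; a real one would call a model and tools."""
    await asyncio.sleep(0.01)  # simulate work
    return f"{task}: done"


async def orchestrate(tasks: dict[str, set[str]]) -> list[list[str]]:
    """Run tasks in dependency order, launching every ready task concurrently.
    Returns the waves of tasks that ran in parallel."""
    done: set[str] = set()
    waves: list[list[str]] = []
    while len(done) < len(tasks):
        ready = sorted(t for t, deps in tasks.items() if t not in done and deps <= done)
        if not ready:
            raise RuntimeError("dependency cycle detected")
        await asyncio.gather(*(run_agent(t) for t in ready))
        done.update(ready)
        waves.append(ready)
    return waves


waves = asyncio.run(orchestrate(TASKS))
for i, wave in enumerate(waves):
    print(f"wave {i}: {wave}")
# wave 0: ['api_endpoints', 'db_models', 'test_suite']
# wave 1: ['integration']
# wave 2: ['deploy_config']
```

Wave-by-wave scheduling is easy to reason about but waits for the slowest task in each wave; a production orchestrator would more likely start each task as soon as its own dependencies complete.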

Risks and Challenges

The benefits are real, but so are the risks. Ignoring them leads to disappointment:

Hallucinations — Agents can generate code that appears correct but contains subtle errors. Review cannot assume agent output is correct—it must be verified with the same rigor as human code.

Security Risks — Agents may introduce vulnerabilities—SQL injection paths, authentication bypasses, or sensitive data exposures—that escape casual review. Security-aware prompting and dedicated security testing are essential.

Debugging Complexity — When agent-generated code fails, diagnosing root cause is harder. Agents may use unconventional patterns or introduce subtle dependencies difficult to trace.

Over-Reliance — The convenience of agent assistance can lead to atrophy of human skills. Teams that stop writing code entirely may lose the ability to evaluate agent output critically.

Infrastructure Costs — Running agentic workflows incurs compute costs, whether for self-hosted models or usage-based fees for hosted model APIs. Cost-benefit depends on task characteristics, team size, and iteration frequency.

Cultural Adaptation — ADLC isn't just a tooling change—it's a workflow change affecting how teams communicate, review, and make decisions.
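To show how a security flaw can slip past casual review, here is a minimal illustration using Python's built-in `sqlite3` module and a hypothetical `users` table. The unsafe lookup behaves correctly for ordinary input; only a crafted string exposes the injection path that the parameterized version closes. This is an example of the kind of pattern reviewers should check for in generated code, not a complete security test.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (name TEXT, is_admin INTEGER)")
conn.executemany("INSERT INTO users VALUES (?, ?)", [("alice", 0), ("bob", 1)])


def find_user_unsafe(name: str) -> list[tuple]:
    # Looks correct and passes a happy-path test, but builds SQL by string
    # interpolation, so crafted input can change the query's meaning.
    query = f"SELECT name, is_admin FROM users WHERE name = '{name}' ORDER BY name"
    return conn.execute(query).fetchall()


def find_user_safe(name: str) -> list[tuple]:
    # Parameterized query: the driver binds `name` as data, never as SQL.
    return conn.execute(
        "SELECT name, is_admin FROM users WHERE name = ? ORDER BY name", (name,)
    ).fetchall()


payload = "x' OR '1'='1"
print(find_user_unsafe("alice"))   # [('alice', 0)]
print(find_user_unsafe(payload))   # [('alice', 0), ('bob', 1)]  every row leaks
print(find_user_safe(payload))     # []
```

Both functions pass a test that only looks up "alice", which is exactly why dedicated security testing, not just functional tests, is needed for agent-generated code.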

Conclusion

The shift from SDLC to ADLC represents a fundamental change in how software is built—not merely a tooling upgrade. ADLC offers tangible benefits in velocity, defect detection, and scalability, but introduces new categories of risk that organizations must actively manage.

For teams evaluating this transition, a pragmatic approach makes sense: start with low-stakes tasks where the upside is clear and the downside is limited. Gradually expand scope as confidence grows and guardrails mature.

The future of software development is unlikely to be purely human-driven or purely agentic. The most effective organizations will build hybrid systems where human intent and agentic execution combine—preserving human judgment where it matters most while leveraging automation where it adds the most value.