Vectorless RAG: Retrieval Without Embeddings

Why it performs better than conventional RAG systems

The Problem Nobody Talks About

Walk into any engineering team working on AI applications, and you'll find something remarkable: nearly every retrieval-augmented generation (RAG) system relies on the same underlying architecture. Vector databases have become the default answer to "how do we find relevant information?" Documents go in, vectors come out, semantic search does the rest.

But here's what's interesting. Some of the most sophisticated AI teams have started questioning this pattern. Anthropic's Claude Code doesn't use vector databases at all. When researchers tested approaches on FinanceBench—a benchmark for answering questions about financial documents—systems using vector RAG with GPT-4o achieved around 31% accuracy. A system called PageIndex, which uses hierarchical document structure instead of vectors, hit 98.7%.

That's not a marginal improvement. That's a fundamental difference in what the architecture can accomplish.

What We're Actually Trying to Solve

Before diving into why vector search struggles, let's be clear about the underlying problem.

You've got a large document—a 200-page insurance policy, a 500-page technical manual, an SEC filing with cross-references scattered across 30 exhibits. A user asks a specific question: "What is the total value of deferred assets in Q3?" or "Does this policy cover water damage from flooding?"

The naive approach—dump the whole document into the LLM's context—fails immediately. You'll hit token limits. You'll pay a fortune. And the model will lose focus, unable to concentrate on the relevant passage.

Traditional RAG was supposed to solve this: break the document into chunks, embed those chunks, retrieve the "most relevant" chunks based on vector similarity, and feed them to the LLM.

Sounds reasonable. In practice, it's a minefield.

Traditional RAG: The Chunk-and-Embed Pipeline

Here's how standard RAG works, end to end.

Indexing phase:

Take a document

Split it into chunks (512 tokens, 1024 tokens, whatever you picked)

Run each chunk through an embedding model

Store vectors in a database (Pinecone, Weaviate, Chroma, pgvector)

Save the original text alongside the vector

Query phase:

Take the user's question

Embed it with the same model

Search the vector DB for "top-k" most similar vectors

Retrieve the original text chunks

Feed them to the LLM as context

Simple. Fast. And increasingly, insufficient.
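The two phases above can be sketched in a few lines. This is a toy, not a production pipeline: a word-count vector stands in for a real embedding model, an in-memory list stands in for the vector database, and the names `chunk`, `embed`, `build_index`, and `retrieve` are illustrative rather than taken from any library.

```python
import math
import re
from collections import Counter


def chunk(text: str, size: int) -> list[str]:
    """Split text into fixed-size chunks of `size` words (a stand-in for token chunks)."""
    words = text.split()
    return [" ".join(words[i:i + size]) for i in range(0, len(words), size)]


def embed(text: str) -> Counter:
    """Toy embedding: a sparse word-count vector. A real system calls an embedding model here."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def build_index(document: str, size: int) -> list[tuple[Counter, str]]:
    """Indexing phase: chunk, embed, and store each vector next to its original text."""
    return [(embed(c), c) for c in chunk(document, size)]


def retrieve(index: list[tuple[Counter, str]], question: str, k: int) -> list[str]:
    """Query phase: embed the question and return the text of the top-k most similar chunks."""
    q = embed(question)
    ranked = sorted(index, key=lambda item: cosine(q, item[0]), reverse=True)
    return [text for _, text in ranked[:k]]


document = (
    "Titanium is a strong lightweight metal used in aerospace. "
    "The melting point of titanium is 1668 degrees Celsius. "
    "Aluminium is softer and melts at a much lower temperature."
)
index = build_index(document, size=9)
context = retrieve(index, "What is the melting point of titanium?", k=1)
print(context[0])  # this string would be passed to the LLM as context
```

For a self-contained question like this one, the top chunk is the sentence that holds the answer, which is why the pattern became the default.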

The Chunking Problem Nobody Admits To

Chunking exists because we can't fit entire documents in context windows, and because naive full-text search doesn't capture semantic meaning well enough.

But chunking introduces problems that nobody really talks about in the happy-path blog posts.

Semantic boundary violation: A chunk might start mid-sentence, mid-paragraph, or mid-thought. A clause that starts in chunk 7 continues in chunk 8, but chunk 8 is retrieved independently, and suddenly the LLM is reading sentences without their antecedents.

Consider a legal document: "Section 4.2.1: Exclusions. Notwithstanding anything to the contrary in Section 3.1, the following items are explicitly excluded from coverage under this policy: (a) flood damage, (b) earthquake damage, except as provided in Appendix C..."

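If chunking splits this near "(a) flood damage," the retriever might return only one side of the split: the half that lists the items but has lost the reference to Section 3.1, or the half that mentions Section 3.1 but has lost the Appendix C exception. The LLM then answers a question about exclusions without seeing the full rule or what the exclusions are exceptions to.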

Context fragmentation: Related information gets scattered across chunks. A table on page 45 and its footnote on page 112 might be semantically related but retrieved in isolation.
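To make the boundary problem concrete, here is a small sketch that runs a naive fixed-size chunker over the clause quoted above. The chunk size of 22 words is an assumption chosen so that the cut lands right before "(a)"; real chunkers count tokens, but the failure looks the same.

```python
clause = (
    "Section 4.2.1: Exclusions. Notwithstanding anything to the contrary in "
    "Section 3.1, the following items are explicitly excluded from coverage "
    "under this policy: (a) flood damage, (b) earthquake damage, except as "
    "provided in Appendix C"
)


def fixed_size_chunks(text: str, size: int) -> list[str]:
    """Naive chunker: cut every `size` words, ignoring sentence and clause boundaries."""
    words = text.split()
    return [" ".join(words[i:i + size]) for i in range(0, len(words), size)]


chunks = fixed_size_chunks(clause, size=22)
flood_chunk = next(c for c in chunks if "flood damage" in c)

print(flood_chunk)
print("mentions Section 3.1:", "Section 3.1" in flood_chunk)
print("says 'excluded':", "excluded" in flood_chunk)
```

The chunk that mentions flood damage no longer says that the items are excluded, and it has lost the reference to Section 3.1. Retrieved on its own, it reads like a plain list of damage types.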

When Vector Similarity Fails

Embedding-based retrieval assumes that semantic similarity correlates with relevance to the query. This works surprisingly well for simple, self-contained questions.

"What is the melting point of titanium?" → Chunks about titanium properties are semantically similar. Vector search handles it fine.

But many real questions don't work that way.

Multi-hop reasoning: "Were there any material changes between Q2 and Q3?" requires comparing information across sections. Vector search retrieves chunks similar to either quarter's text, but doesn't reason about the relationship.

Cross-referenced information: A query asks for "total value of deferred assets." The answer isn't in the main filing body—it's in Appendix G, referenced by footnote 14. Vector search might miss this connection.

Precise numeric retrieval: "What was the exact figure for accounts receivable in FY2024?" Vector similarity will find text about it, but might retrieve a chunk discussing trends rather than the specific number.

The core issue: similarity != relevance. Two pieces of text can be semantically similar without answering the question being asked.
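A toy ranking shows the gap between similarity and relevance. The two chunks and the numbers in them are invented for illustration, and the word-count `embed` function is an assumption standing in for a real embedding model. Real models are far better than word counts, so read this as an illustration of the failure mode, not a measurement.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy embedding: word counts. Stands in for a real embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


question = "What was the exact figure for accounts receivable in FY2024?"

# Invented example chunks: one talks about the number, the other contains it.
trend_chunk = (
    "Management discussion: accounts receivable in FY2024 was higher than the "
    "prior year, and the exact figure for accounts receivable is reported in "
    "the balance sheet."
)
table_chunk = "Balance sheet (USD millions): Cash 120; Accounts receivable 342; Inventory 87."

q = embed(question)
ranked = sorted([trend_chunk, table_chunk], key=lambda c: cosine(q, embed(c)), reverse=True)

for text in ranked:
    print(round(cosine(q, embed(text)), 2), text[:50])
```

The chunk that discusses the figure shares most of the question's wording and ranks first. The chunk that actually contains the figure shares almost none of it and ranks last.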

Why We Need a Different Approach

When Anthropic's Claude Code team rebuilt their codebase understanding system, they didn't use vector databases. They went structure-first.

When PageIndex was tested on FinanceBench—150 questions across 30 real SEC filings—it crushed vector RAG. 98.7% versus 31%.

These aren't marginal gains. They're evidence that a fundamentally different architecture is viable.

Vectorless RAG: Structure-First Retrieval

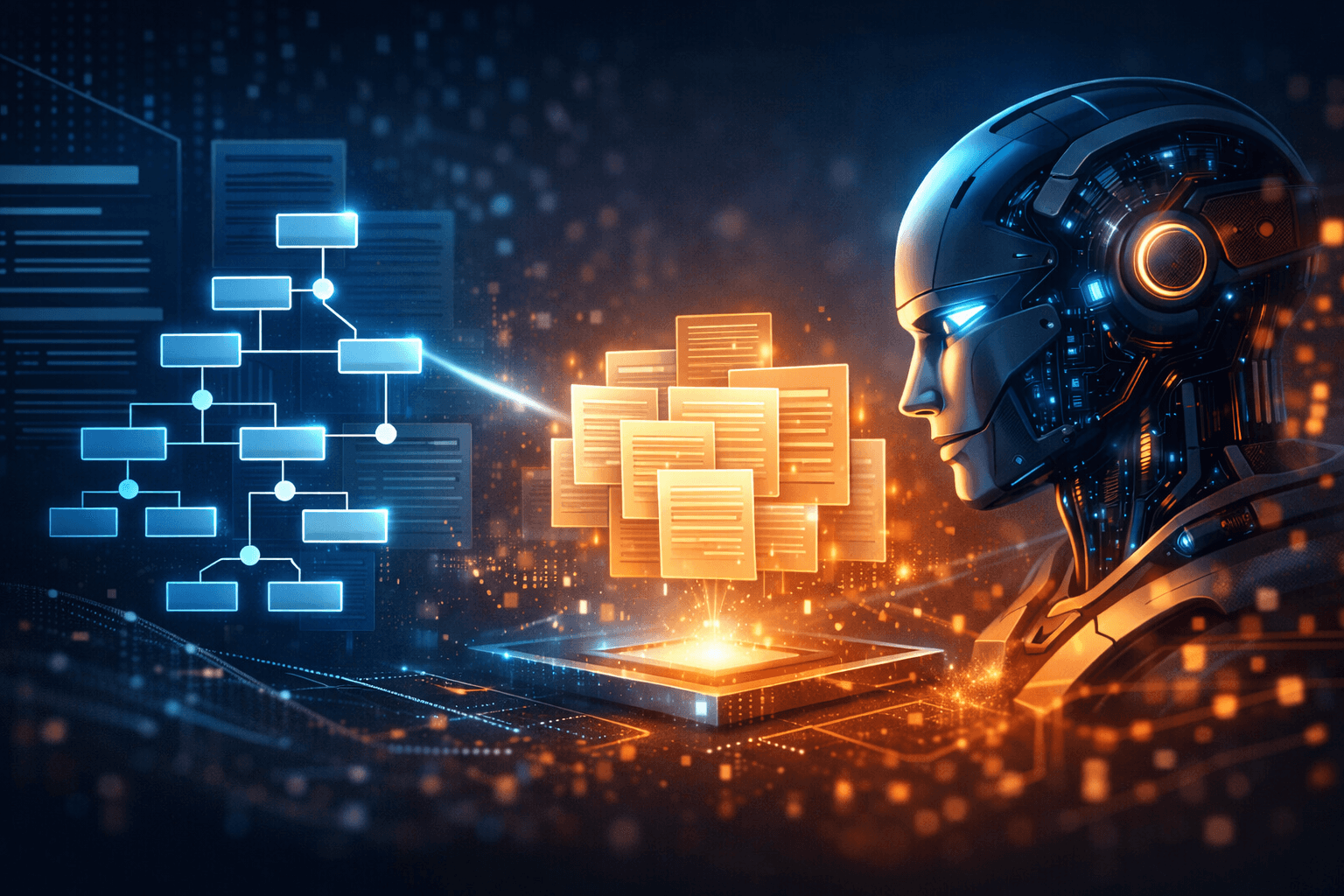

Vectorless RAG (the approach popularized by PageIndex) is a retrieval paradigm that inverts the default assumption. Instead of embedding documents and searching for similarity, it:

Parses the structure of documents (headings, sections, tables, cross-references)

Builds a hierarchical representation of that structure

Uses reasoning to navigate the structure based on the query

The core shift: structure first, content second.

The Hierarchy: Representing Documents as Trees

Every well-structured document has inherent hierarchy.

A textbook has parts, chapters, sections, subsections. A legal contract has clauses, sub-clauses, definitions, exhibits. An SEC filing has the main document, footnotes, appendices.

Vectorless RAG makes this hierarchy explicit by building a tree.

Root node: The document itself.

Branch nodes: Major sections (e.g., "Part I," "Item 1: Business").

Intermediate nodes: Subsections (e.g., "1.1 Overview," "Item 1A: Risk Factors").

Leaf nodes: Actual content, such as paragraphs, tables, and figures.

Each node stores:

A summary (generated by an LLM during indexing)

A pointer to the original content

Metadata (section number, depth level, content type)

The key insight: cross-references (like "see Appendix G") become explicit edges in the tree.
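One possible shape for such a node is sketched below. The field names, the page-range pointer, and the page numbers are assumptions made for illustration; the text above only specifies that a node carries a summary, a content pointer, metadata, and cross-reference edges. Note that once cross-references are added, the structure is strictly a tree plus extra edges, which is why they are stored as node ids rather than as children.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Node:
    node_id: str
    title: str
    summary: str                                   # written by an LLM at indexing time
    content_ref: Optional[tuple[int, int]] = None  # pointer to the source, here a (first_page, last_page) range
    section_number: str = ""
    depth: int = 0
    content_type: str = "section"                  # e.g. "section", "paragraph", "table", "figure"
    children: list["Node"] = field(default_factory=list)
    cross_refs: list[str] = field(default_factory=list)  # ids of nodes reached via "see ..." references

    def add(self, child: "Node") -> "Node":
        """Attach a child and set its depth relative to this node."""
        child.depth = self.depth + 1
        self.children.append(child)
        return child


# Hypothetical filing: footnote 14 points at Appendix G, as in the earlier example.
root = Node("10-k", "Form 10-K", "Annual report")
notes = root.add(Node("notes", "Notes to Financial Statements", "Footnotes to the statements"))
footnote = notes.add(Node("fn-14", "Footnote 14", "Points to deferred asset detail",
                          content_ref=(88, 88), content_type="paragraph",
                          cross_refs=["appendix-g"]))
appendix = root.add(Node("appendix-g", "Appendix G", "Deferred asset schedule",
                         content_ref=(140, 143), content_type="table"))
```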

Building the Tree: The Indexing Pipeline

Step 1: Parse raw content. Extract text, headings, tables, figures using PDF parsers.

Step 2: Detect section boundaries. Using heading styles or explicit numbering.

Step 3: Assign depth levels. Map sections to tree depth based on hierarchy.

Step 4: Generate node summaries. LLM generates 2-3 sentence summaries for each node.

Step 5: Detect cross-references. Parse "see Appendix A," "as described in Section 4.2."

Step 6: Assemble the tree. Connect nodes according to hierarchy and cross-references.
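The six steps can be sketched on plain text. This version makes several simplifying assumptions: the input is already extracted text (no PDF parsing), headings use explicit numbering such as "4.2 Title", cross-references look like "Section 3.1", and `summarize` is a placeholder that keeps the first sentence where a real indexer would call an LLM. A body line that happens to start with a number would be misread as a heading, so treat this as a sketch rather than a robust parser.

```python
import re

HEADING = re.compile(r"^(\d+(?:\.\d+)*)\s+(.+)$")        # e.g. "4.2 Natural Events"
CROSS_REF = re.compile(r"Section\s+(\d+(?:\.\d+)*)", re.IGNORECASE)


def summarize(text: str) -> str:
    """Placeholder for the LLM summarizer: keeps the first sentence only."""
    return text.split(". ")[0].strip()


def annotate(node: dict) -> None:
    """Steps 4 and 5: attach a summary and cross-reference targets to every node."""
    node["summary"] = summarize(node["body"]) if node["body"] else node["title"]
    node["cross_refs"] = [n for n in CROSS_REF.findall(node["body"]) if n != node["number"]]
    for child in node["children"]:
        annotate(child)


def build_tree(raw: str) -> dict:
    """Steps 2, 3, and 6: detect headings, derive depth from numbering, assemble the tree."""
    root = {"number": "", "title": "Document", "depth": 0, "body": "", "children": []}
    stack = [root]
    for line in raw.splitlines():
        line = line.strip()
        if not line:
            continue
        match = HEADING.match(line)
        if match:
            number, title = match.groups()
            depth = number.count(".") + 1           # "4" -> 1, "4.2" -> 2
            node = {"number": number, "title": title, "depth": depth, "body": "", "children": []}
            while stack[-1]["depth"] >= depth:      # climb back up to this heading's parent
                stack.pop()
            stack[-1]["children"].append(node)
            stack.append(node)
        else:
            stack[-1]["body"] += (" " if stack[-1]["body"] else "") + line
    annotate(root)
    return root


raw_text = """
3 Coverage
This policy covers accidental damage to the insured property.
3.1 Water Damage
Sudden water damage from burst pipes is covered.
4 Exclusions
4.2 Natural Events
Notwithstanding Section 3.1, flood damage is excluded.
"""
tree = build_tree(raw_text)
natural_events = tree["children"][1]["children"][0]
print(natural_events["title"], "->", natural_events["cross_refs"])
```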

Retrieval: Reasoning-Based Traversal

Instead of "embed query, find similar vectors," you run a reasoning loop:

Evaluate the root. LLM reads root summary and decides which sections to explore.

Level-by-level descent. At each level, evaluate the sibling nodes and descend only into the most promising ones.

Use cross-reference edges. Traverse explicit edges during reasoning.

Collect at leaf nodes. Retrieve content relevant to the query.

Generate the answer. LLM generates response grounded in retrieved content.

Query Execution Flow

User asks: "What was the total executive compensation for the CEO?"

Tree evaluation: LLM reads root → selects "Executive Compensation" → descends

Node selection: LLM evaluates child nodes → selects "CEO Compensation Details"

Cross-reference handling: Traverses to "Summary Compensation Table"

Content retrieval: Retrieves relevant paragraphs, table data

Answer generation: LLM synthesizes answer from structured context
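The traversal loop and the walkthrough above can be sketched together. In this sketch `pick_child` is a stand-in for the LLM router: it picks the child whose summary shares the most words with the query, where a real system would prompt a model with the child summaries. The tree, the node ids, and the bracketed content strings are placeholders invented for the example.

```python
import re


def words(text: str) -> set[str]:
    return set(re.findall(r"[a-z]+", text.lower()))


def pick_child(query: str, children: list[dict]) -> dict:
    """Stand-in for the LLM router: pick the child whose summary overlaps the query most."""
    return max(children, key=lambda c: len(words(query) & words(c["summary"])))


def node(node_id, summary, content="", children=(), cross_refs=()):
    return {"id": node_id, "summary": summary, "content": content,
            "children": list(children), "cross_refs": list(cross_refs)}


def index_by_id(root: dict) -> dict[str, dict]:
    found, stack = {}, [root]
    while stack:
        current = stack.pop()
        found[current["id"]] = current
        stack.extend(current["children"])
    return found


def traverse(root: dict, query: str) -> tuple[list[str], list[str]]:
    """Descend from the root to a leaf, then follow that leaf's cross-reference edges."""
    by_id = index_by_id(root)
    current, path = root, [root["id"]]
    while current["children"]:
        current = pick_child(query, current["children"])
        path.append(current["id"])
    collected = [current["content"]]
    for ref in current["cross_refs"]:
        path.append(ref)
        collected.append(by_id[ref]["content"])
    return path, collected


tree = node("root", "Annual proxy statement", children=[
    node("governance", "Board structure and corporate governance policies",
         content="[governance text]"),
    node("exec-comp", "Executive compensation for the CEO and other officers", children=[
        node("ceo-details", "CEO compensation details: salary, bonus, total compensation",
             content="[CEO compensation paragraphs]", cross_refs=["sct"]),
        node("director-pay", "Director fees and equity grants", content="[director pay text]"),
    ]),
    node("sct", "Summary Compensation Table with salary and bonus columns",
         content="[Summary Compensation Table rows]"),
])

path, context = traverse(tree, "What was the total executive compensation for the CEO?")
print(" -> ".join(path))
```

The returned `path` doubles as an audit trail, and `context` is what would be handed to the LLM for answer generation. This sketch follows a single branch per level; a real router could keep several candidates alive at each step.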

The Human Analogy

Think about how you would find information in a physical book.

You wouldn't randomly sample pages hoping to find something relevant. You'd look at the table of contents, identify the likely chapter, scan the section headings, and drill down.

Vector RAG is closer to flipping straight to whichever pages sound most like your question, with no idea where they sit in the book. Structure-first retrieval is like using the table of contents.

Vector vs Vectorless RAG: A Comparison

| Aspect | Vector RAG | Vectorless RAG |
|---|---|---|
| Retrieval Method | Embedding similarity search | Structure-based reasoning |
| Data Structure | Flat vector space | Hierarchical tree |
| Context Preservation | Lost across chunk boundaries | Preserved in structure |
| Query Robustness | Sensitive to phrasing | Resilient to wording variations |
| Cross-References | Invisible to retrieval | Explicit edges in tree |
| Multi-hop Reasoning | Limited | Native support |
| Latency | Low | Higher (LLM reasoning loops) |
| Cost Model | Embedding + vector DB | LLM calls during indexing and at each traversal step |
| Maturity | Well-established | Emerging |
| Scalability | Excellent | Good |
| Explainability | Low (black-box) | High (structured path) |

Advantages of Vectorless RAG

Structural integrity: Document structure is preserved and exploited, not destroyed by chunking.

Semantic coherence: Content stays connected to its context, headers, and related sections.

Query robustness: The system doesn't break when users phrase questions differently.

Better multi-hop reasoning: The tree structure makes connecting information across sections natural.

Auditability: You can trace exactly where the answer came from by following the traversal path.

Trade-offs and Constraints

Higher latency: LLM-based traversal involves multiple model calls. For real-time applications, this matters.

Increased compute cost: Each traversal step involves an LLM call.

LLM dependency: Quality depends on the LLM's ability to reason about structure.

Engineering complexity: Building a tree indexer requires more work than "chunk and embed."

Not universally better: Vector search excels at large-scale retrieval. Vectorless RAG shines for deep retrieval within individual documents.

Caching helps: Summaries can be cached rather than regenerated.
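A minimal sketch of summary caching, assuming summaries are keyed by a hash of the node's text so that unchanged sections are never re-summarized. `llm_summarize` is a placeholder for a real model call, and the `SummaryCache` class is illustrative rather than part of any library.

```python
import hashlib

llm_calls = {"count": 0}


def llm_summarize(text: str) -> str:
    """Placeholder for a real LLM call; it only truncates, and counts how often it runs."""
    llm_calls["count"] += 1
    return text[:40]


class SummaryCache:
    """Caches summaries by content hash, so unchanged nodes are not summarized twice."""

    def __init__(self, summarize):
        self._summarize = summarize
        self._store: dict[str, str] = {}

    def get(self, node_text: str) -> str:
        key = hashlib.sha256(node_text.encode("utf-8")).hexdigest()
        if key not in self._store:
            self._store[key] = self._summarize(node_text)
        return self._store[key]


cache = SummaryCache(llm_summarize)
section = "Flood damage is excluded from coverage under this policy."
first = cache.get(section)
second = cache.get(section)             # served from the cache, no second call
cache.get(section + " Amended 2024.")   # changed text, new hash, one more call
print(llm_calls["count"])
```

This only reduces indexing cost. The per-query traversal calls described above are not affected by it.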

When to Use Vectorless RAG

Good fit:

Legal documents: Contracts, compliance filings, regulatory documents

Financial reports: SEC filings, annual reports, audit documents

Technical manuals: API documentation, user guides, specifications

Books and long-form content: When narrative flow matters

Structured corpora: Documents with clear hierarchical organization

Bad fit:

Real-time systems: Where milliseconds matter

Unstructured/noisy data: Chat logs, social media

High-throughput APIs: Millions of queries per day

Small, simple documents: Where chunking works fine

Implementation Blueprint

Building a Vectorless RAG system:

Data Pipeline:

Document → Parser → Section Detector → LLM Summarizer → Cross-Ref Detector → Tree Assembler → Tree Store

Storage: Tree structure (JSON or graph), node-to-content mapping, cached summaries
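As one way to lay this out, the sketch below keeps the tree (with cached summaries) and the node-to-content mapping as two JSON-serializable structures and round-trips them through `json`. The key names and the sample policy text are assumptions for illustration.

```python
import json
from typing import Optional

tree = {
    "id": "root", "title": "Home Policy", "summary": "Coverage terms and exclusions",
    "cross_refs": [],
    "children": [
        {"id": "3.1", "title": "Water Damage", "summary": "Burst-pipe damage is covered",
         "cross_refs": [], "children": []},
        {"id": "4.2", "title": "Natural Events", "summary": "Flood damage is excluded",
         "cross_refs": ["3.1"], "children": []},
    ],
}

# Node-to-content mapping lives outside the tree so the tree stays small enough to reason over.
content_store = {
    "3.1": "Sudden water damage from burst pipes is covered.",
    "4.2": "Notwithstanding Section 3.1, flood damage is excluded.",
}


def find(node: dict, node_id: str) -> Optional[dict]:
    """Depth-first lookup of a node by id."""
    if node["id"] == node_id:
        return node
    for child in node["children"]:
        hit = find(child, node_id)
        if hit is not None:
            return hit
    return None


serialized = json.dumps({"tree": tree, "content": content_store}, indent=2)
restored = json.loads(serialized)

target = find(restored["tree"], "4.2")
print(target["summary"], "|", restored["content"][target["id"]])
```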

Query Engine:

Query → Tree Root → LLM Router → Traversal Controller → Content Retriever → LLM Answer Generator

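Tools: A Python-based stack is the common choice. LlamaIndex provides a tree index, LangChain can host the routing agent, and a graph database such as Neo4j helps when cross-references turn the tree into a dense graph.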

Hybrid RAG: The Emerging Pattern

The most promising production architectures combine both approaches:

Vector search for coarse retrieval: Quickly narrow to top 100 candidates from 10,000 documents

Structure-aware retrieval for precision: Within those candidates, narrow to the precise section

LLM generation from structured context: Final answer grounded in precisely retrieved content
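A compact sketch of the two retrieval stages, under stated assumptions: stage one ranks documents by cosine similarity over toy word-count vectors of their summaries (standing in for a vector database), and stage two picks the best section inside the surviving candidates by word overlap (standing in for LLM-guided tree traversal). The corpus is invented for the example.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy embedding: word counts. Stands in for a real embedding model."""
    return Counter(re.findall(r"[a-z]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


corpus = {
    "policy-home": {
        "summary": "Home insurance policy covering water damage, fire, and theft",
        "sections": {
            "Coverage": "Sudden water damage from burst pipes is covered.",
            "Exclusions": "Flood damage is excluded from this policy.",
        },
    },
    "policy-auto": {
        "summary": "Auto insurance policy covering collision and liability",
        "sections": {"Collision": "Repairs after a collision are covered."},
    },
    "handbook-hr": {
        "summary": "Employee handbook on leave and benefits",
        "sections": {"Leave": "Employees accrue leave each month."},
    },
}


def coarse_candidates(query: str, top_n: int) -> list[str]:
    """Stage 1: cheap similarity search over document summaries."""
    q = embed(query)
    ranked = sorted(corpus, key=lambda d: cosine(q, embed(corpus[d]["summary"])), reverse=True)
    return ranked[:top_n]


def best_section(query: str, doc_ids: list[str]) -> tuple[str, str]:
    """Stage 2: structure-aware selection inside the candidate documents only."""
    q = set(embed(query))
    scored = [
        (len(q & set(embed(title + " " + text))), doc_id, title)
        for doc_id in doc_ids
        for title, text in corpus[doc_id]["sections"].items()
    ]
    _, doc_id, title = max(scored, key=lambda s: s[0])
    return doc_id, title


query = "Does the home policy cover flood water damage?"
candidates = coarse_candidates(query, top_n=2)
doc_id, section = best_section(query, candidates)
print(candidates, "->", doc_id, "/", section)
```

The design point is that the expensive reasoning step only ever sees the handful of documents that survive the cheap first pass.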

Future Outlook

LLM reasoning capabilities are improving. Models are getting better at understanding structure and following cross-references.

Reliance on embeddings is being questioned. Results like the FinanceBench comparison are challenging the "embed everything" orthodoxy.

Structured knowledge is becoming central. As LLMs become better reasoners, structure-first approaches are better positioned.

Hybrid architectures will dominate production. Pure vector or pure tree retrieval will give way to intelligent combinations.

Conclusion

The shift from similarity-based retrieval to structure-based retrieval represents a fundamental change in how we think about RAG systems.

Vector search was optimized for a world where we needed to approximate meaning with embeddings. Now that LLMs can reason about structure, navigate documents, and follow cross-references, we can build retrieval systems that track where information lives, not just which text sounds similar.

The core message: from similarity-based retrieval to structure + reasoning-based retrieval.

Vector search isn't going away—it excels at large-scale semantic retrieval. But for the long documents, complex queries, and precision requirements that define enterprise RAG, structure-first approaches offer real advantages.

The 98.7% accuracy on FinanceBench isn't a fluke. It's evidence that when you build retrieval systems around how documents are actually structured, you get fundamentally better results.