Why Most OpenClaw Systems Fail at Scale (and how to fix them)

From personal experience

Here’s an uncomfortable truth.

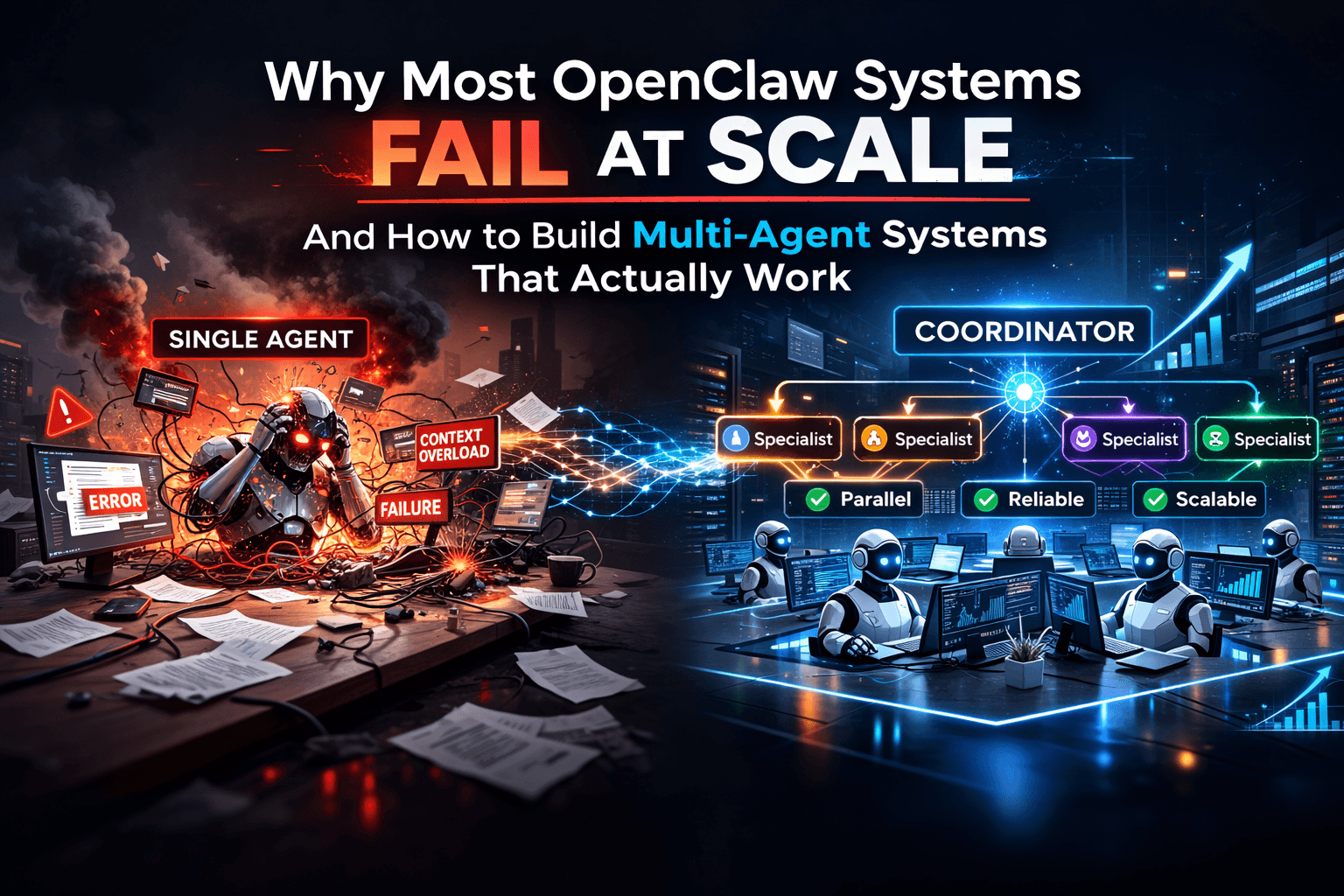

Most engineers experimenting with OpenClaw start by building a single-agent system. It works beautifully at first. Prompts behave well. Workflows feel simple. The architecture seems clean.

Then the system grows.

More tasks.

More tool calls.

More context.

More automation.

And suddenly things start breaking in strange ways.

Prompts that worked yesterday start producing inconsistent results. Context gets bloated. Tool responses clutter the conversation. A single failure derails the entire workflow.

This isn’t a flaw in OpenClaw. It’s a limitation of how many engineers structure their agent architecture.

Single-agent workflows are great for prototypes. But when systems grow, they often need a different structure.

The Five Problems That Appear in Large Single-Agent Systems

1. Context Window Pressure

LLMs technically process the entire context window, but very large prompts tend to degrade performance.

As conversation history grows, the model must reason across:

system prompts

tool responses

intermediate outputs

previous reasoning steps

task instructions

When large amounts of text accumulate, models often rely more heavily on recent information and may become less consistent in following earlier instructions.

This is especially noticeable when agents run long workflows with many tool interactions.

2. Prompt Pollution

Every tool call usually returns data.

That data often gets added to the agent’s history.

Over time the prompt can accumulate:

verbose API responses

logs

JSON payloads

intermediate reasoning

The model then starts reacting to historical outputs instead of the current task.

This phenomenon is sometimes called prompt pollution — when accumulated history interferes with new decisions.

The result is slower responses, higher token costs, and less reliable outputs.

3. Sequential Execution Bottlenecks

Many first implementations of agent workflows end up sequential by design.

The agent:

completes task A

then performs task B

then performs task C

This works fine for small pipelines.

But imagine a workflow that needs to:

call five APIs

process results

run analysis

generate a report

If everything happens sequentially, the system becomes unnecessarily slow.

Architectures that allow parallel work can dramatically improve throughput.

4. Shared State Risks

When everything runs inside a single conversation or agent session, all tasks share the same state.

This means:

mistakes propagate

corrupted context affects future steps

debugging becomes harder

Separating work into independent sessions or agents can help contain failures and simplify recovery.

5. Growing Coordination Complexity

As workflows grow, a single agent ends up doing too many jobs:

task planning

execution

tool selection

state management

result synthesis

This increases prompt complexity and often leads to fragile instructions.

Breaking responsibilities across specialized agents can make systems easier to reason about.

A Common Scaling Pattern: Coordinator + Specialists

One architecture pattern many engineers adopt is a coordinator-specialist model.

Instead of a single agent doing everything:

a coordinator agent receives tasks and decides how to handle them

specialist agents perform specific types of work

For example:

Coordinator receives a request:

“Generate a weekly market report”

The coordinator might:

spawn a data collection agent

spawn a data analysis agent

spawn a report writing agent

Each specialist performs one job and returns results to the coordinator.

Why This Pattern Works Well

Context Isolation

Each specialist operates in its own session.

This prevents large histories from polluting other tasks.

Parallel Execution

Multiple specialists can work at the same time.

For example:

five API calls can run concurrently

multiple analysis tasks can run simultaneously

This dramatically improves workflow speed.

Failure Containment

If one specialist fails, the coordinator can:

retry the task

spawn a replacement

continue with partial results

The entire system doesn’t have to fail because one component did.

Cost Optimization

Not every task requires the same model.

You can run:

simpler specialists on smaller models

complex reasoning on larger models

This helps control token costs.

Clearer Responsibilities

Each agent has a focused role.

Examples:

data-fetcher

summarizer

report-generator

classifier

Smaller prompts and clearer responsibilities often produce more reliable outputs.

Designing Specialists Correctly

Specialist agents work best when they are narrow and focused.

Instead of giving them every tool, provide only what they need.

Example concept:

const dataFetcherAgent = {

name: "data-fetcher",

tools: [

"http_request",

"json_parse",

"data_transform"

]

}

This follows the principle of least privilege.

The agent only has access to the tools required for its job.

Delegating Work with Spawned Sessions

In OpenClaw, agents can create new sessions and delegate tasks.

Conceptually the workflow looks like this:

Coordinator Agent

│

├── Data Fetcher Agent

│

├── Analysis Agent

│

└── Report Writer Agent

Each session runs independently and returns results back to the coordinator.

This allows orchestration logic to remain simple while work happens in parallel.

Common Mistakes Engineers Still Make

Even with multi-agent architectures, some problems appear repeatedly.

Giving specialists too many tools

Over-permissioned agents increase risk and complexity.

Passing excessive context between agents

Large context handoffs increase token cost and reduce performance.

Specialists should receive only the information required for their task.

Making specialists too generic

Agents that try to do many different things become harder to control.

Smaller roles usually work better.

Ignoring failure handling

Coordinators should be able to:

retry failed tasks

handle timeouts

continue with partial results when necessary

The Real Cost of Multi-Agent Systems

Multi-agent architectures introduce coordination overhead.

Every delegation involves:

passing context

receiving results

synthesizing outputs

This increases token usage compared to a single-agent system.

But in practice the benefits often outweigh the cost:

better reliability

improved parallelism

clearer architecture

easier debugging

The key is designing specialists to be small, focused, and efficient.

What Most Engineers Eventually Discover

Single-agent workflows are perfect for experiments and small automations.

But as systems grow, complexity increases.

At that point, introducing coordination and specialized agents often makes the system easier to scale and maintain.

The goal isn’t to build the most agents possible.

It’s to design systems where each component has a clear responsibility and minimal context.

That’s where agent-based systems tend to perform best.